The Pentagon is playing a high-stakes game of legal chicken with one of the most prominent AI labs in the world, and it isn't looking good for the government's credibility. A U.S. judge is now openly skeptical. He’s asking the questions that most of us in the tech industry have been whispering for months. Why is the Department of Defense suddenly treating Anthropic like a national security pariah?

If you've followed the rise of Claude and the safety-first culture at Anthropic, the government's stance feels like a sudden heel turn. For a long time, Anthropic was the "responsible" child of the AI boom. They talked about Constitutional AI. They focused on safety benchmarks. Now, they're caught in a bureaucratic tug-of-war that could redefine how private tech companies interact with military contracts.

The core of the dispute is the Pentagon's decision to label Anthropic a potential security threat, a move that effectively freezes them out of certain lucrative government projects. But here’s the kicker. The judge overseeing the case isn't buying the "national security" blanket defense that the government usually hides behind. He wants receipts.

The judge pulls back the curtain on the Pentagon

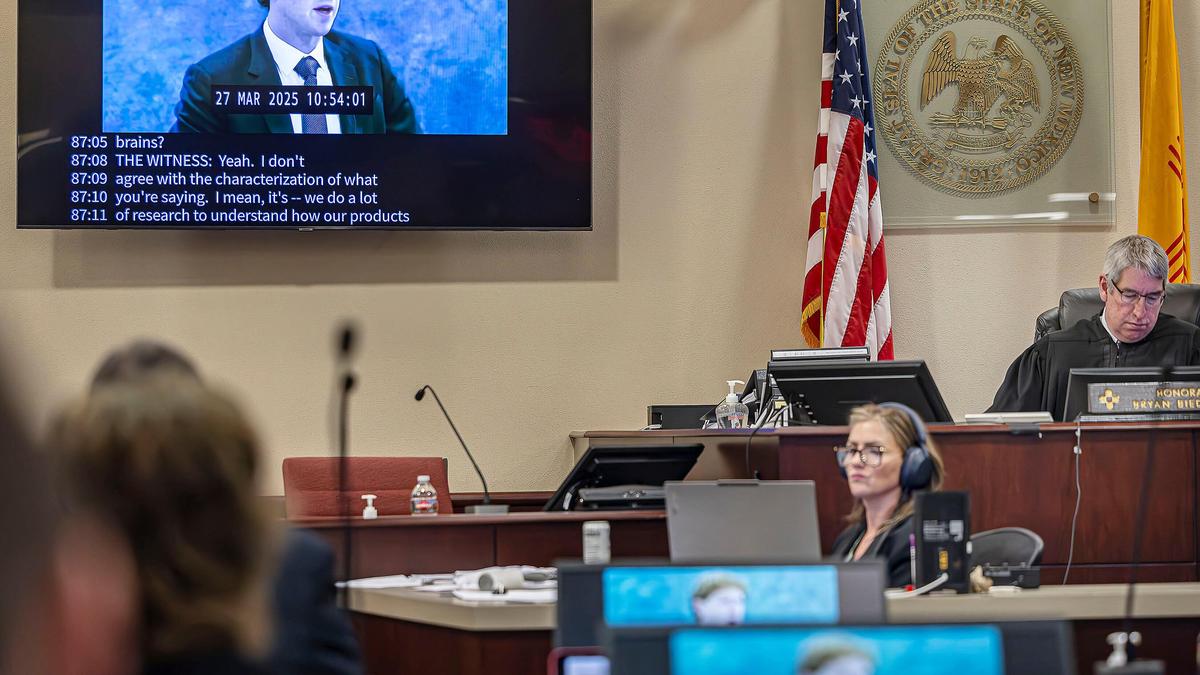

U.S. District Judge Richard Leon isn't known for being a pushover. In recent hearings, he's been grilling the Pentagon’s legal team about the timing and the logic behind their restrictive stance. It’s one thing to say a company poses a risk. It’s another thing entirely to prove that risk outweighs the benefit of using some of the most advanced large language models on the planet.

The Pentagon’s lawyers tried to lean on the usual tropes. They mentioned foreign influence. They hinted at data vulnerabilities. But Leon pushed back. He pointed out that the Department of Defense (DoD) often works with companies that have far more complex international ties than Anthropic. If the rules aren't being applied equally, the "security threat" label starts to look less like a safety measure and more like a political tool or a way to favor legacy defense contractors.

This isn't just about one company’s bottom line. It’s about whether the government can use vague security concerns to pick winners and losers in the AI race. If the Pentagon can arbitrarily blacklist a company like Anthropic without clear, transparent criteria, it creates a chilling effect across the entire Silicon Valley ecosystem. Why would a startup invest billions in safety and alignment if the government can just shut it out of the public sector market on a whim?

Why the security threat label feels like a reach

Anthropic’s whole brand is built on being the "safe" alternative to OpenAI. They’ve pioneered techniques like Constitutional AI, where the model is trained to follow a specific set of ethical principles. They have a massive investment from Amazon and Google, yes, but their governance structure is designed to resist outside pressure that would compromise their mission.

The Pentagon’s argument seems to hinge on the idea that because Anthropic’s models are so powerful, they are inherently dangerous if they fall into the wrong hands or if they are "misaligned." But that applies to every frontier model. By that logic, the DoD shouldn't be using any modern AI.

I’ve talked to engineers who work in this space. They’ll tell you that the real security threat isn't a company like Anthropic. The threat is the U.S. falling behind in AI adoption because our procurement process is stuck in the 1990s. While the Pentagon squabbles in court, other global powers are moving fast. They aren't letting bureaucratic red tape stop them from integrating LLMs into their strategic planning.

The hidden battle over data and control

There’s a deeper layer to this fight that most people are missing. It’s not just about "security" in the sense of spies or hacking. It’s about data sovereignty. The Pentagon wants total control over the weights and the training data of the models it uses. Anthropic, like most private AI labs, guards those weights like the crown jewels.

The DoD is used to a world where they own the hardware and the software. They buy a fighter jet, they own the jet. But AI doesn't work like that. You’re often buying access to a service or a pre-trained model that the company continues to refine. This clash of cultures—the rigid, ownership-heavy military vs. the iterative, service-based tech world—is where the real friction lies.

The "security threat" label is a blunt instrument. It's the loudest way for the Pentagon to say, "We don't like that we can't see inside your black box." But instead of negotiating better terms or technical safeguards, they’ve reached for the nuclear option and are now defending it in court. Judge Leon’s skepticism suggests that this strategy might backfire. If the court finds the Pentagon acted "arbitrarily and capriciously," it could force a massive overhaul of how the military vets tech partners.

The legacy contractor problem

We can't ignore the role of the "Old Guard." Companies like Raytheon, Lockheed Martin, and Northrop Grumman have spent decades building relationships within the Pentagon. They know how to navigate the paperwork. They also see the rise of AI labs as a direct threat to their dominance.

When a company like Anthropic or OpenAI enters the fray, they’re offering capabilities that traditional defense contractors can’t match—at least not yet. It’s in the best interest of the legacy players to whisper in the ears of procurement officers about the "risks" of these new, unvetted Silicon Valley upstarts. Whether or not that’s happening here is up for debate, but the optics certainly suggest a bias toward the established players.

How this affects the broader AI market

If the court rules against the Pentagon, it’s a massive win for transparency. It means the government has to provide a clear roadmap for what constitutes a "safe" AI partner. We need those rules. Right now, it’s all vibes and secret memos.

For you as a developer, investor, or tech leader, this case is a bellwether. It tells us whether the "dual-use" nature of AI—the fact that it can be used for both civilian and military purposes—is going to be a bridge or a wall. If it’s a wall, we’re going to see a split in the industry. You’ll have "defense-only" AI companies and "civilian" AI companies, with very little overlap. That would be a disaster for innovation.

The best tech usually comes from the commercial sector and then trickles into specialized fields. If we silo AI development because of vague security fears, both the military and the public lose out.

What happens if Anthropic wins

A win for Anthropic doesn't just mean they get to bid on contracts again. It means the judiciary is finally stepping in to check the executive branch's power over the tech industry. For too long, "national security" has been a "get out of jail free" card for the government when it wants to avoid explaining its decisions.

Judge Leon is essentially saying that even the Pentagon has to follow the law. They have to have a rational basis for their decisions. If they can’t point to a specific, credible reason why Anthropic is more dangerous than, say, a traditional cloud provider or a foreign-linked hardware manufacturer, then the label has to go.

Steps for tech companies navigating government scrutiny

- Audit your international ties. Even if you think your funding is clean, the government will dig into every seed investor and board member. Be ready to explain those relationships before they become a headline.

- Invest in air-gapped solutions. The military wants tech that can run without an internet connection. If you can’t offer a version of your model that lives entirely on their servers, you’re going to face an uphill battle.

- Document your safety protocols. Anthropic’s focus on safety is actually their best defense. They can point to years of research showing that they take risk seriously. If you're a smaller player, start building that paper trail now.

- Hire people who speak "Pentagon." You need experts who understand the Federal Acquisition Regulation (FAR). You can't just send a 24-year-old product manager to a meeting at the DoD and expect it to go well.

This battle is far from over. The Pentagon will likely appeal any ruling that goes against them, citing the risk of setting a precedent that limits their power. But the fact that a judge is even asking these questions is a sign that the era of "trust us, it's a security issue" is ending. The government needs to catch up to the reality of the 2026 tech landscape, or they’ll find themselves defending their motives in court while the rest of the world moves on without them.

The next move is on the DoD. They either provide the evidence Judge Leon is looking for, or they admit that the "security threat" label was a convenient excuse to keep a disruptive player out of their backyard. Honestly, after watching this unfold, it’s hard to see it any other way.

Keep an eye on the court filings over the next month. They’ll tell you everything you need to know about the future of AI in the public sector. Use this time to tighten your own compliance and transparency measures. The bar for working with the government just got a lot higher, even if the government itself can't quite explain why.

Wait for the official ruling before you pivot your government sales strategy. If Anthropic clears this hurdle, the floodgates for "frontier" AI in defense will likely swing wide open. Be ready to move when they do.